Field experiments are often risky and can produce unexpected outcomes. Take our recent experience in Ghana as an example. My colleagues and I are in the early stages of evaluating an intervention that aims to improve farmer welfare by promoting integrated soil fertility management through the training of local extension agents. In February 2016, we pre-tested our baseline survey for the evaluation over a period of two days. The survey includes an exercise adapted from Tanaka, Camerer and Nguyen (2010) to measure respondents’ risk preferences. The game is played with real money so that the elicited risk preferences are incentive-compatible. The rules of the game are:

The respondent receives an initial endowment of GHC 12 (~ USD 3.6) which can be used to play a game in which he/she can win a maximum of GHC 24 (including the initial endowment of GHC 12) or lose some of the initial endowment and leave with a minimum of GHC 2. Respondents who choose to play are asked to choose between Option A and Option B for each of the 11 rounds depicted in Table 1 below.

Once the respondent’s selection for each round has been noted down, the respondent draws a random number from 1 to 11 out of an opaque bag. The round that corresponds to the number drawn is then played. If the respondent chose Option A in the randomly selected round, then he/she keeps the GHC 12 endowment. If the respondent chose Option B in the randomly selected round, then the enumerator tosses a coin to determine whether the respondent goes home a winner (with GHC 24) or a loser (with some amount between GHC 2 and GHC 12).

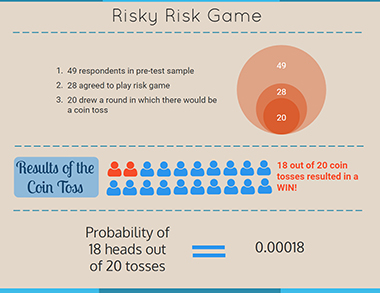
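The payout procedure described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name `play_game` and the `PLACEHOLDER_LOSS_PAYOUTS` list are hypothetical, and the loss amounts are placeholders that merely respect the GHC 2 to GHC 12 range given in the rules. They are not the values from Table 1.

```python
import random

ENDOWMENT = 12    # GHC, kept when Option A was chosen in the drawn round
WIN_PAYOUT = 24   # GHC, paid when the coin toss is won
NUM_ROUNDS = 11

# Placeholder loss amounts, one per round (NOT the actual Table 1 values).
PLACEHOLDER_LOSS_PAYOUTS = list(range(12, 1, -1))  # 12, 11, ..., 2

def play_game(choices, loss_payouts, rng):
    """Return the payout in GHC for one respondent.

    choices      -- list of 11 strings, each "A" (fixed) or "B" (coin toss)
    loss_payouts -- list of 11 amounts paid if the toss is lost in that round
    rng          -- a random.Random instance standing in for bag and coin
    """
    if len(choices) != NUM_ROUNDS or len(loss_payouts) != NUM_ROUNDS:
        raise ValueError("expected one choice and one loss payout per round")
    drawn = rng.randrange(NUM_ROUNDS)   # number drawn from the opaque bag
    if choices[drawn] == "A":
        return ENDOWMENT                # fixed payout, no coin toss
    won_toss = rng.random() < 0.5       # a single fair coin toss
    return WIN_PAYOUT if won_toss else loss_payouts[drawn]

rng = random.Random(2016)
safe_payout = play_game(["A"] * NUM_ROUNDS, PLACEHOLDER_LOSS_PAYOUTS, rng)
risky_payout = play_game(["B"] * NUM_ROUNDS, PLACEHOLDER_LOSS_PAYOUTS, rng)
print(safe_payout, risky_payout)
```

A respondent who always chooses Option A always leaves with GHC 12. A respondent who always chooses Option B leaves with GHC 24 or with the loss amount for the drawn round.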

We have data for 49 respondents from our pre-test exercise. Of the 49 respondents surveyed, 28 (57 percent) agreed to play the game. Of the 28 who agreed to play, 8 (29 percent) landed on a round in which they had chosen a fixed payout and went home with GHC 12. The remaining 20 drew a round that resulted in a coin toss. Of the 20 who played a toss, 19 (95 percent) received a payout of GHC 24 and one (5 percent) received GHC 12. One enumerator confessed that her respondent actually lost the toss but she gave him GHC 24 as he threatened her with a curse. Thus, to the best of our knowledge, 18 (90 percent) respondents won and 2 (10 percent) lost the coin toss.

Assuming a fair coin, the probability of exactly 18 out of 20 coin tosses resulting in heads is:

$$\binom{20}{18} \, (0.5)^{18} \, (0.5)^{2} \approx 0.00018$$
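For readers who want to check the arithmetic, here is a minimal Python sketch of the same binomial calculation. The helper names `binomial_pmf` and `binomial_upper_tail` are our own, and the coin is assumed to be fair. The second figure, the chance of 18 or more wins, is not part of the original calculation. It is included because "a result at least this extreme" is arguably the more relevant question.

```python
from math import comb

def binomial_pmf(k: int, n: int, p: float = 0.5) -> float:
    """Probability of exactly k successes in n independent trials."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)

def binomial_upper_tail(k: int, n: int, p: float = 0.5) -> float:
    """Probability of k or more successes in n independent trials."""
    return sum(binomial_pmf(i, n, p) for i in range(k, n + 1))

exactly_18 = binomial_pmf(18, 20)
at_least_18 = binomial_upper_tail(18, 20)

print(f"P(exactly 18 of 20)  = {exactly_18:.5f}")   # 0.00018
print(f"P(at least 18 of 20) = {at_least_18:.5f}")  # 0.00020
```

Either way, the chance is roughly 2 in 10,000.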

An outcome of 18 heads out of 20 coin tosses is possible, though highly improbable. Being economists with a robust faith in probability, we began to doubt everything. Did the enumerators take pity on the respondents and fake a win so that they would get more money? Were they tossing the coins ‘wrong’? Did the enumerators collude with the respondents and split the payout? Are all Ghanaian coins loaded?

We politely confronted the enumerators about the possible falsification of the coin toss results. Apart from the one case mentioned earlier, all enumerators maintained that their coin tosses had resulted in heads. The enumerators also seemed pretty confident in their coin tossing abilities. On further reflection, collusion between enumerators and respondents seemed unlikely. The pre-test was carried out in one large common area where two to three supervisors, including me, were making rounds to check on the enumerators, so collusion would have been far too risky. Lastly, I confirmed that Ghanaian coins are in fact not loaded.

Was this a freak accident, or were the results actually falsified by the enumerators (who also happen to be terrific actors!)? We never figured out why this happened, but the improbability of it all convinced us to drop the ‘real money’ component of the game and conduct it as a hypothetical exercise. Given the information available to us, it was probably the only option we had.

Where does all this leave us? From my perspective, here are some possible ways to deal with such situations in the future. First, ensure that enumerators clearly understand the instructions to the game. In this case, if I could go back in time, I would spend more time explaining that the coin must be tossed once and only once and that there are no do-overs. Second, maybe a coin toss is not the best way to generate a 50-50 chance in the field. A bag with 10 balls (5 green and 5 red) might have been better. Third, pre-test different versions of contentious games. We detected these skewed winnings at the end of the first day, attributed the results to a freak accident, and gave the enumerators another chance on day two. In hindsight, using day two to test the game without money, or adding a third pre-test day for that purpose, might have been a better strategy. There is no way to know for sure whether any of these strategies would have worked, but the important lesson here is to spend more time pre-testing and understanding how such games are received in different contexts.