Large language models (LLMs)—artificial intelligence systems trained on vast collections of text and other data that can interpret user prompts, summarize information, and generate natural-language outputs—hold the potential to give policymakers site-specific, up-to-date agricultural knowledge and insights. Yet LLMs have many applications beyond retrieving and summarizing knowledge. They are at the core of AI agents: programs or systems that autonomously perform diverse tasks, engaging with an array of online tools and data. AI agents are extraordinarily flexible; they have been used to design (and in some cases replace) office workflows, to diagnose medical conditions, and to carry out research, among other things.

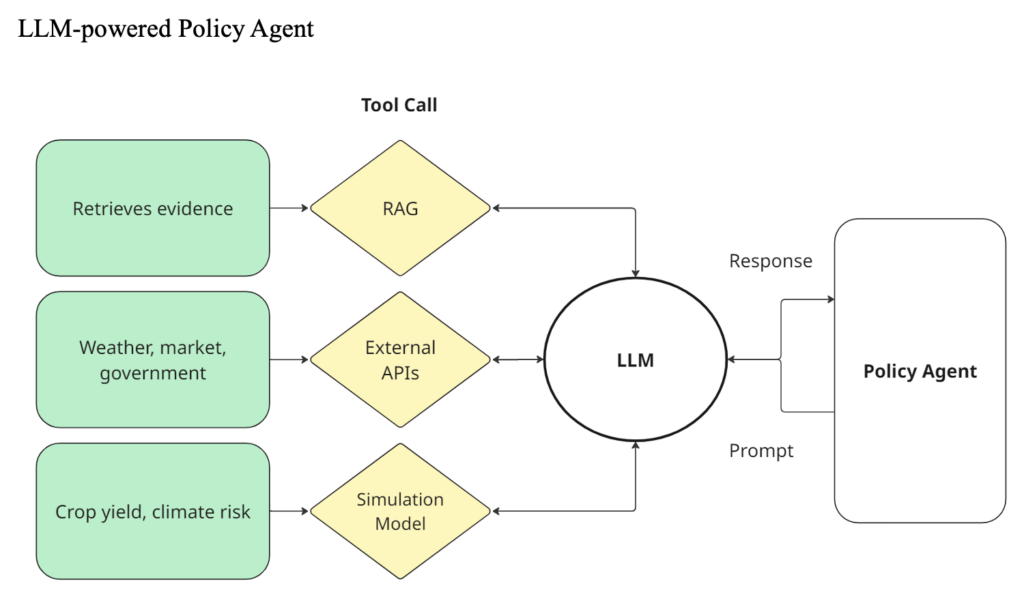

AI agents have the potential to be important tools in policy development, and in agrifood policy in particular. Figure 1 gives an overview of how this works in concept: the policy agent sends prompts to the LLM; the LLM determines what information is needed; and the agent then carries out the required tool calls—retrieving documents, querying external APIs, or running simulation models—before feeding the results back into the LLM for interpretation. Through this iterative loop, LLM-powered agents can help policymakers explore scenarios, test assumptions, and generate insights in real time.

Figure 1
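
To make the loop in Figure 1 concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not the AgriLLM implementation: `stub_llm`, `TOOLS`, `run_policy_agent`, and the placeholder tool outputs are all hypothetical names invented for this example, and a real agent would replace the stub with an actual model call and real document, API, or simulation tools.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class LLMReply:
    """What the (stubbed) LLM returns on each turn."""
    tool_requests: List[str] = field(default_factory=list)  # tools it wants run
    answer: str = ""  # final interpretation, set once no more tools are needed


def stub_llm(question: str, observations: Dict[str, str]) -> LLMReply:
    """Hypothetical stand-in for a model call: requests each tool once, then answers."""
    wanted = ("retrieve_documents", "run_simulation")
    needed = [name for name in wanted if name not in observations]
    if needed:
        return LLMReply(tool_requests=needed[:1])
    summary = "; ".join(f"{k}: {v}" for k, v in sorted(observations.items()))
    return LLMReply(answer=f"Answer to '{question}' based on {summary}")


# Hypothetical tools; real ones would query document stores, APIs, or models.
TOOLS: Dict[str, Callable[[str], str]] = {
    "retrieve_documents": lambda q: "2 placeholder documents",
    "run_simulation": lambda q: "placeholder scenario result",
}


def run_policy_agent(question, llm=stub_llm, tools=TOOLS, max_turns=5):
    """Iterate: ask the LLM, run the tools it requests, feed results back."""
    observations: Dict[str, str] = {}
    for _ in range(max_turns):
        reply = llm(question, observations)
        if not reply.tool_requests:
            return reply.answer  # the LLM has interpreted the results
        for name in reply.tool_requests:
            observations[name] = tools[name](question)
    raise RuntimeError("No answer within max_turns")


print(run_policy_agent("What policy lever can stabilize maize prices?"))
```

The `max_turns` cap is a design choice in this sketch: it keeps the loop from running indefinitely if the model keeps requesting tools without settling on an answer.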

Against this backdrop, a group of agricultural researchers and experts convened at the 2025 CGIAR Gender in Food, Land, and Water Systems Conference in Cape Town, South Africa, on October 7-9 to unpack the opportunities and challenges of using AI agents to support policy processes. Building on similar workshops held with agricultural extension staff around Africa, we explored how human scientific advisors interpret questions, probe for intent, and translate evidence into actionable guidance, and what these activities reveal about where AI systems may add value or face limitations. These discussions are part of the AgriLLM project, which aims to equip the agricultural community, including researchers, policymakers, and smallholder farmers, with tailored AI tools and models.

Defining a scientific advisor

To jump-start discussions, we asked participants to describe a scientific advisor in just one or two words. Responses included “evidence explainers,” “distillers,” and “telegrams,” highlighting the skill required to translate lengthy, complex research into concise policy briefs or elevator pitches that clearly convey:

- What? (topic)

- So what? (relevance)

- Now what? (policy action)

Building on this idea, participants also described scientific advisors as “power adapters” that translate complex, “high-voltage” research signals into a clear, steady “current” suitable for implementation.

Advisor-policymaker interactions

The first phase of the workshop focused on how advisors interpret a policy question. Such questions are not all the same: some are tactical and immediate—such as “What policy lever can we adjust to stabilize maize prices this season?”—while others are strategic and long-term, like “How can we reduce market volatility while protecting smallholder incomes?” Advisors must also account for the policymaker’s underlying values (e.g., policy priorities, political orientation), as well as the constraints and opportunities that shape the broader decision-making context.

Participants described several analytical dimensions that shape how advisors interpret and respond to policy questions:

- Policy process. Research can enter the policy process at multiple stages. Recognizing which stage an advisor is targeting helps set realistic expectations about the type and extent of research influence.

- Policy action. Policy change involves many categories of action and a wide array of actors (different ministries and agencies, multiple levels of government, and distinct branches of authority), all of which may need to be considered in policy development and design, outreach, and communication.

- Power and politics. Policymaking is shaped by visible and hidden forms of power. Election cycles, political narratives, and partisan agendas influence which issues rise to the surface and how evidence is interpreted. Two policymakers with opposing ideologies may pose the same question but with very different intentions—and use the same evidence either to defend or to critique a policy.

- Audience and actors. Advisors must map who has a stake in the issue—proponents, opponents, and those who are neutral—and understand their interests and incentives. These alignments interact with political dynamics, shaping the feasibility of different policy options.

- Framing. The same evidence can be framed in different ways depending on the audience. For instance, advice to implement fortified foods could be framed as “food fortification is a cost-effective way to address vitamin A deficiencies” to a finance ministry, vs. “access to healthy foods should be a human right” to a civil society organization or a donor. Advisors select frames that resonate with the political ideology and priorities of the audience.

A complicated path

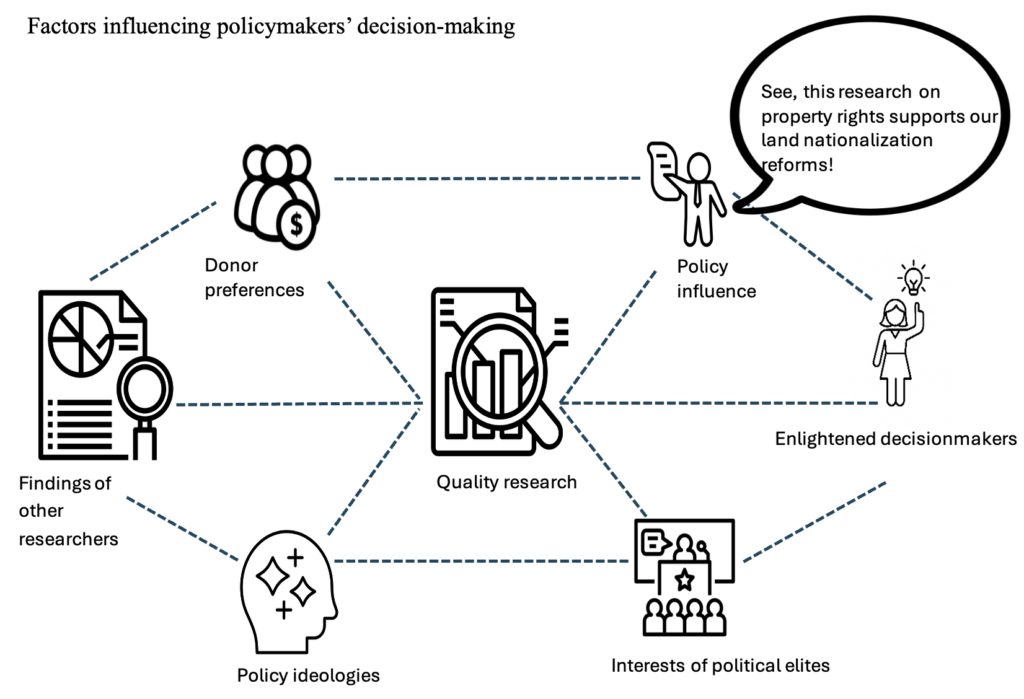

These discussions of the diverse, intersecting pathways in almost any kind of policy development, illustrated in Figure 2, showed that real-life scientific advice and policy influence are inherently complex and often messy. In stark contrast, the translation of knowledge into advice shown in Figure 1 is relatively structured and linear, highlighting the gap between technical workflows and the realities of policymaking.

Figure 2

Design implications

Bringing these lessons to bear on the practicalities of designing an AI policy agent, participants identified several implications for how such systems should be built and deployed.

- Account for hidden intentions and cognitive biases. Policy decisions are rarely made on evidence alone. Communication science’s “iceberg model” reminds us that only a small portion of intentions are visible; a decision-maker’s beliefs, motivations, and values lie beneath the surface. AI systems should prompt users to clarify and explicitly state their intent, values, and constraints—reducing the risk that the model reinforces confirmation bias by supplying only the evidence that supports a user’s pre-existing narrative.

- Ensure outputs are salient, legitimate, and credible. Human advisors rely on relational cues, lived experience, and tacit knowledge to “read the room” and judge what is relevant. LLM-powered agents expected to navigate the nuances of policy must be designed to support the criteria identified by David Cash and colleagues: salient (relevant to context), legitimate (making reasoning steps transparent), and credible (clearly distinguishing evidence from inference or interpretation).

- Address structural biases in training data. LLMs are predominantly trained on written, digitized, and Western-centric datasets, and are often developed by teams outside the regions where the tools will be deployed. Thus, they may omit African linguistic, cultural, and epistemic contexts; this in turn can lead to outputs, and ultimately policy recommendations, that do not fit those contexts. Design must therefore prioritize inclusion of regionally grounded datasets, participatory curation involving African researchers and institutions, and guardrails for identifying when the model lacks relevant cultural grounding.

- Incorporate social and cultural nuance. Across much of Africa, communication is deeply oral, metaphorical, and context-dependent. To avoid misinterpretation or overly literal responses, AI policy agents should be fine-tuned on locally relevant linguistic forms, allow users to correct or contextualize the system’s interpretations, and avoid overconfidence when responding to culturally situated or norm-laden questions.
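
Two of these implications, prompting users to state their intent, values, and constraints, and clearly distinguishing evidence from inference, can be sketched in a few lines of Python. This is a rough illustration only, not a design that participants specified: the field names in `REQUIRED_CONTEXT`, the `Statement` type, the wording of the follow-up questions, and the example statements and source label are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List

# Assumed context fields, taken from the "intent, values, and constraints" wording.
REQUIRED_CONTEXT = ("intent", "values", "constraints")


def missing_context(request: Dict[str, str]) -> List[str]:
    """Return the context fields the user has not yet filled in."""
    return [key for key in REQUIRED_CONTEXT if not request.get(key, "").strip()]


def clarifying_questions(request: Dict[str, str]) -> List[str]:
    """Build one follow-up question per missing field (hypothetical wording)."""
    return [
        f"Please describe your {key} for this question."
        for key in missing_context(request)
    ]


@dataclass
class Statement:
    text: str
    kind: str  # "evidence" or "inference"
    source: str = ""  # where the evidence comes from, if known


def render(statements: List[Statement]) -> str:
    """Label each statement so readers can tell evidence from inference."""
    lines = []
    for s in statements:
        if s.kind == "evidence":
            label = f"[evidence: {s.source or 'source not given'}]"
        else:
            label = "[inference]"
        lines.append(f"{label} {s.text}")
    return "\n".join(lines)


request = {"question": "How can we reduce market volatility?", "intent": "budget planning"}
print(clarifying_questions(request))
print(render([
    Statement("Placeholder finding from a cited study.", "evidence", "placeholder report"),
    Statement("This finding may apply to the current season.", "inference"),
]))
```

In this sketch the agent would ask the follow-up questions before retrieving any evidence, so that the stated intent is on record rather than inferred from the phrasing of the question.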

The way forward

The workshop made clear that while AI agents can structure information and reasoning with remarkable efficiency, real policymaking is shaped by political incentives, social norms, and contextual judgments that no model can fully infer from text alone. Closing this gap requires rethinking design, not just improving algorithms.

Participants emphasized that effective AI for policy must be built with policymakers, not simply delivered to them. Systems need grounding in local data, cultural and linguistic nuance, and the realities of how evidence is interpreted within political environments. They must also surface uncertainty, prompt for missing context, and avoid reinforcing users’ existing biases.

The goal is not to replace scientific advisors but to augment them—providing rapid synthesis and decision support while leaving space for human judgment and negotiation. With human-centered design, participatory development, and attention to equity and representation, AI agents can become useful companions in the policy process rather than generic information engines.

In short, the path forward lies in co-created, context-aware AI tools that respect the complexity of policymaking and help deliver advice that is credible, relevant, and actionable.

Kristin Davis is a Senior Research Fellow with IFPRI’s Natural Resources and Resilience (NRR) Unit based in South Africa; Eliot Jones-Garcia is an NRR Senior Research Analyst based in Washington, D.C.; Hlamalani Ngwenya holds the Research Chair in Communication for Innovation at the University of the Free State, Bloemfontein, South Africa; Arielle Rosenthal is a Manager with Shared Planet; Amanda Grossi is Research Team Leader for Climate Action and Resilient Food Systems at the Alliance of Bioversity & CIAT; Mia Speier is a Senior Consultant, Digital Rights with Shared Planet. Opinions are the authors’.

The authors thank Caroline Muchiri, Youthika Chauhan, Miranda Morgan, and Aayushi Malhotra for their input at the Gender Conference. Special thanks to Danielle Resnick and the Feed the Future Innovation Lab for Food Security Policy Research, Capacity & Influence for material on the policy process.

ChatGPT was used iteratively during the planning, drafting, and revision of this blog post. The authors provided original content and the text was carefully reviewed and edited for publication.